Trainees Edition

Trainers Edition

Module 15: Managing Filters

Module Description

The main purpose of this Module is to explain how to manage filters and what can be done to avoid them.

The secondary aim is to guide trainers who want to use the content of this Module to train their trainees.

To serve these aims, the Module presents the topic of managing filters together with guidelines on how to teach it.

Trainees who successfully complete this Module will be able to:

- understand what personalization and its types are

- understand the effects of personalization and filtering

- understand what users can do to avoid filter bubbles

- understand what platforms can do to avoid filter bubbles

Additionally, trainers who successfully complete this Module will be able to demonstrate an understanding of how to teach managing filters and what can be done to avoid filter bubbles.

Module Structure

This Module consists of the following parts:

- Objective, Description of the Content and Learning Outcomes

- Structure of the Module

- Guidelines for Trainees

- Guidelines for Trainers (how to get prepared, methods to use and tips for trainers)

- Content (study materials and exercises)

- Quiz

- Resources (references and recommended sources and videos)

The main objectives of the Module, the description of the content and the learning outcomes are explained in the Module Description part. Guidelines for Trainees includes instructions and suggestions for trainees. Guidelines for Trainers leads trainers through the different phases of the training and provides tips which could be useful while teaching the subject. Content includes all study materials and the content-related exercises. Quiz includes multiple-choice and true/false questions for trainees to test their progress. Resources has two components: references and recommended sources for further reading and study. References is the list of sources cited in the content part. Recommended sources is a list of supplemental sources and videos which trainees are strongly encouraged to read and watch to learn more about the topic.

Guidelines for Trainees

Trainees are expected to read the text, watch the recommended videos and do the exercises. They can consult the suggested resources for further information. After studying the content, trainees are strongly advised to take the quiz to evaluate their progress. They can review the study material if needed.

Guidelines for Trainers

Guidelines for trainers includes suggestions and tips for trainers about how to use the content of this Module to train people on the subject.

Getting Prepared

Preparing a presentation (PowerPoint/Prezi/Canva) which is enriched with visual materials (images and video clips) and clear, concrete examples is strongly suggested. It is also suggested to adapt the examples and exercises in this Module to issues which are more familiar to the actual target group. Choosing local (country-specific) examples about current or well-known issues helps to illustrate a point more clearly. It also helps to draw the attention of trainees. The more familiar and popular the examples are, the better the message will be communicated.

Getting Started

A short quiz (3 to 5 questions) in Kahoot or questions with Mentimeter can be used at the beginning to engage participants in the topic. It can serve both as a motivation tool and as a way to check trainees’ existing knowledge about the subject. Example questions could be: What is personalization? What impact can personalization have on users?

Methods to Use

Various teaching methods can be used in combination during the training, such as:

- Lecturing

- Discussion

- Group work

- Self reflection

Tips for Trainers

Warming-up

An effective way of involving participants and setting common expectations about what they will learn is to ask a few preliminary questions on the subject. This can be done through group work by asking trainees to discuss and collect ideas, but also individually by asking each participant to write their ideas on sticky notes. The activity can be conducted as follows:

- Ask trainees:

  - whether they personalize the platforms they use themselves (such as Google, Facebook, YouTube, etc.)

  - whether they are aware of the personalization carried out by the algorithms on the platforms they use (such as Google, Facebook, YouTube, etc.)

  - whether they themselves do anything to avoid the personalization made by the algorithms on the platforms they use

  - whether they are aware that they are under the influence of filter bubbles or echo chambers

Presenting the Objective of the Lesson

The objective of the lesson (how to manage filters and what can be done to avoid them) should be made clear. After the warm-up questions, it will be easier to clarify the objective.

Presenting the Lesson Content

While presenting the content, make sure to interact with the trainees and encourage them to participate actively.

- After explaining personalization and its types, ask participants if they are aware of personalization.

- When mentioning different results from search engines for the same search, support your claim with examples of searches, if possible, by different people and in different locations (countries).

- Explain the effects of using sources with the same perspective when looking for news or information on any subject, by associating them with filter bubbles and echo chambers.

- Emphasize why it is important for individuals to be aware of personalization and filtering. Talk about its importance both for the individual and for a democratic society.

Concluding

Make a short summary of the lesson and ask a couple of questions that help underline the most important messages you would like to give.

The following questions can help:

- Ask trainees what users can do to avoid filter bubbles.

- Ask trainees what platforms can do to avoid filter bubbles.

When concluding, make sure that trainees understand the effects of personalization and filtering.

Content: Managing Filters

Introduction

What algorithms are, how they work, their pros and cons, their effects, their connection with news feeds, and detailed information about filter bubbles and echo chambers are explained in Module 6. This module focuses on what can be done to avoid the filters used in algorithms.

Today, we mostly depend on algorithmic personalization and recommendations (like Google's personalized results and the Facebook news feed, which decides for us whose updates we see) (Pariser, 2011a). The algorithms make these choices using the data collected by the platforms based on our past use and the data we voluntarily give to the platforms (Fletcher, n.d.). At this point, it is worth mentioning the distinction between self-selected personalization and pre-selected personalization.

Self-selected personalization refers to personalization that we do voluntarily, and such personalization is especially important when it comes to news use. People are always making various decisions to personalize their news use (for example, which newspapers to buy, which TV channels to watch, and which to avoid). This situation is also called “selective exposure” and it is affected by a number of different things, such as people’s interest in news, their political beliefs, etc. (Fletcher, n.d.).

Pre-selected personalization, on the other hand, is personalization done to people, sometimes by algorithms and sometimes without their knowledge. This is directly related to filter bubbles, because algorithms make choices on behalf of people who may not be aware of it (Fletcher, n.d.).

For many users, personalizing search results is helpful and convenient. On the other hand, many users are uncomfortable with the fact that the sites they encounter are shaped by forces beyond their control (Ensor, 2017). Essentially, focusing on providing and consuming content that closely aligns with your preferences can create a bubble or chamber that limits your view of the wider picture (Ensor, 2017).

People usually have a specific purpose when they use search engines to access news: to find a particular news item. However, when you search for a particular topic, search engines may use algorithmic selection based on data collected about your past use. So when people log into search engines, there is a possibility that algorithmic selection will keep them in a filter bubble (Fletcher, n.d.). In 2011, it was stated that Google offered personalized results by looking at 57 different signals (from where users are to what type of browser they are using) (Pariser, 2011a). Today, it is stated that more than 200 factors are taken into account when deciding how relevant the results listed on Google are (Dean, 2021). However, exactly what else Google's algorithm takes into account is not entirely clear.
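As a rough illustration of how signal-based personalization can return different results for the same query, the toy sketch below scores a fixed list of results against a user profile. Everything in it is an assumption made for illustration: the two signals (`country` and `topics`), the weights and the sample results are invented, and Google's actual ranking is not public and is certainly far more complex.

```python
from dataclasses import dataclass, field


@dataclass
class Result:
    title: str
    country: str  # hypothetical signal: where the page is published
    topic: str    # hypothetical signal: what the page is about


@dataclass
class Profile:
    country: str = ""                               # "" models an unknown location
    topics: set = field(default_factory=set)        # interests inferred from past use


def score(result: Result, profile: Profile) -> int:
    """Add up invented weights for every signal that matches the profile."""
    points = 0
    if profile.country and result.country == profile.country:
        points += 2
    if result.topic in profile.topics:
        points += 3
    return points


def rank(results: list, profile: Profile) -> list:
    # sorted() is stable, so results with equal scores keep their original order.
    ordered = sorted(results, key=lambda r: score(r, profile), reverse=True)
    return [r.title for r in ordered]


RESULTS = [
    Result("Election results overview", "US", "politics"),
    Result("What the election means for Turkey", "TR", "politics"),
    Result("Election night and the markets", "US", "economy"),
]

logged_in_user = Profile(country="TR", topics={"economy"})
anonymous_user = Profile()

print(rank(RESULTS, logged_in_user))   # reordered to match the profile
print(rank(RESULTS, anonymous_user))   # original order, nothing to personalize on
```

The same three results are available to both users; only the order changes. With a long result list, what is pushed down the ranking is effectively hidden, which is how a filter bubble can form.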

The effects of personalization on Google are illustrated in the examples below. The first example is from a logged-in user in Turkey. The second is from an anonymous user in Turkey. The last one is from an anonymous user in the US.

Source: Google Search for “US presidential election” by a logged-in user from Turkey

Source: Google Search for “US presidential election” by an anonymous user from Turkey

Source: Google Search for “US presidential election” by an anonymous user from the USA

Social media platforms, on the other hand, often combine self-selected personalization with pre-selected personalization. Users choose which news organizations to follow, and the platforms know these preferences. At the same time, algorithms may hide news from people that users are not interested in or from outlets they do not particularly like (Fletcher, n.d.).

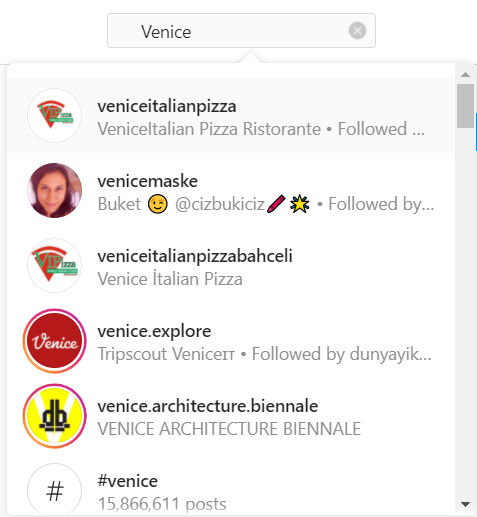

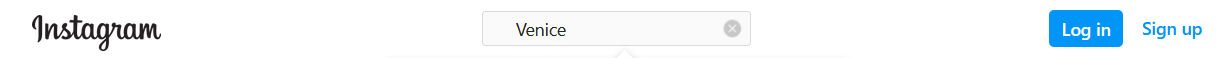

The effects of personalization on Instagram are illustrated in the examples below. The first example is from a logged-in user in Turkey and the second is from an anonymous user in Turkey.

Source: Instagram Search for “Venice” by a logged-in user from Turkey

Source: Instagram Search for “Venice” by an anonymous user from Turkey

Most platforms do not provide transparent information about the algorithms running in the background. It has been argued that the algorithms of search engines, social networking platforms and other major online intermediaries create filter bubbles that reduce the variety of information individuals can access and that can be used for different purposes, which may pose a great threat to democracy (Bozdağ & van den Hoven, 2015, p. 249). The lack of transparency of the filters limits freedom of choice, and the large amount of data generated by users' information behavior is used to classify individuals into categories whose definitions are not transparent. Citizens, however, need to be aware of different views and options so that they can weigh them and make reasonable decisions. This is prevented when algorithms decide on behalf of users which content they can access, without their knowledge and without showing them what the other content is.

How Can We Avoid Filter Bubbles?

The only way to get rid of filter bubbles completely is to stop using Google, social media platforms and news platforms (Ensor, 2017). However, this is not a very realistic solution. Although it is not possible to escape completely from algorithms and from the filter bubbles and echo chambers they produce, there are some points that both users and platforms should pay attention to.

What Can Users Do?

1. Drawing on different sources instead of depending on one or a few sources:

Echo chambers existed before Google and Facebook. For example, newspapers have been reporting the news with their own bias for years. This can be seen from the differences in how newspapers and news platforms interpret what is going on in the world (Ensor, 2017). Following news sites that aim to offer a broad perspective can keep you from adopting the biases of individual platforms. Whichever sources you use frequently, a quick glance at their front pages will give you an idea of any bias (Farnam Street, n.d.). The most powerful tool for escaping the filter bubble of platforms like Google is your own awareness of the situation. If you are searching for important information, you should try to use multiple sources and look at the situation objectively (Ensor, 2017).

It is not easy to break habits, change the news sources that you check every day, or add new ones. However, varying your path online from time to time significantly increases your chances of encountering new ideas and people (Pariser, 2011b, p. 122).

It can be argued that filter bubbles cause or enable social segregation along political lines, and that exposing people to content from alternative political perspectives will reduce political extremism, which is extremely important in dealing with polarization (Stray, 2012). A hunger for truth is the most important factor in overcoming filter bubbles. To prevent democracy from being threatened, it is extremely important for everyone to hear all sides of an issue and to read about problems in multiple sources (Allred, 2018).

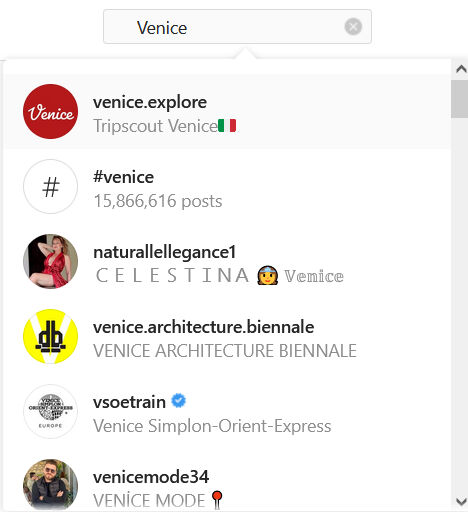

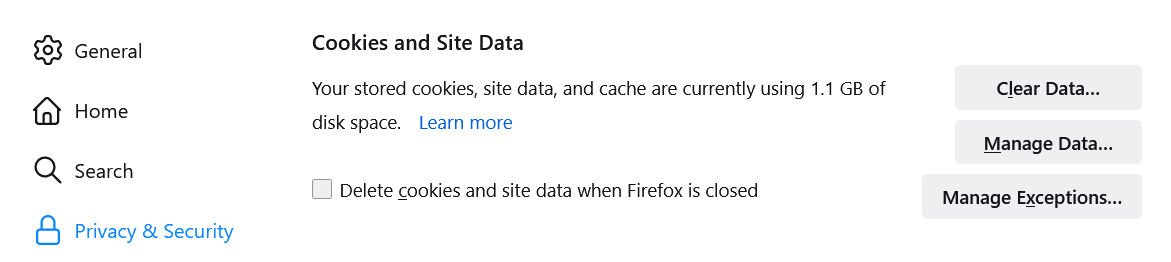

2. Deleting or blocking browser cookies:

Many websites place “cookies” (small text files) every time we visit them. These cookies are then used to determine what content is shown to us. Regularly deleting the cookies that your internet browser uses to identify who you are is a partial solution (Farnam Street, n.d.; Pariser, 2011b, p. 122).
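To make the role of cookies more concrete, the following minimal sketch simulates a website that recognizes a returning visitor by a cookie and builds up a browsing profile. The function `handle_visit`, the cookie name `visitor_id` and the page paths are hypothetical and chosen only for illustration; real sites also rely on other signals, which is why deleting cookies is only a partial solution.

```python
import uuid
from http.cookies import SimpleCookie


def handle_visit(cookie_header: str, profiles: dict, page: str) -> str:
    """Toy website: identify the visitor by cookie and record the page viewed.

    Returns the cookie string the browser would send back on its next visit.
    """
    cookie = SimpleCookie()
    cookie.load(cookie_header)  # parse whatever the browser sent (may be empty)
    if "visitor_id" in cookie:
        visitor_id = cookie["visitor_id"].value   # returning visitor
    else:
        visitor_id = uuid.uuid4().hex             # unknown visitor: issue a new ID
    profiles.setdefault(visitor_id, []).append(page)
    return f"visitor_id={visitor_id}"


profiles = {}

# First visit: the browser has no cookie yet, so the site issues one.
header = handle_visit("", profiles, "/sports")
# Second visit: the browser sends the cookie back and the profile grows.
header = handle_visit(header, profiles, "/sports/football")
# After the user deletes cookies, the browser sends nothing,
# so the site can no longer link this visit to the earlier profile.
handle_visit("", profiles, "/politics")

print(len(profiles))  # 2 separate profiles instead of one
```

As long as the cookie is sent back, every page view is added to the same profile, which can then be used to decide what content to show. Deleting the cookie breaks that link for this particular identifier.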

Chrome - Settings - Privacy and Security - Cookies and Other Site Data

Firefox - Settings - Privacy & Security - Cookies and Site Data

Cookies can be deleted manually (select “Settings”, then delete cookies in the “Privacy and Security” section). There are also browser extensions that remove cookies (Farnam Street, n.d.).

In addition to clearing cookies and your search/browser history, you can also do what you need to do online without logging into your accounts (for example, without logging into Gmail) (Farnam Street, n.d.; Pariser, 2011b, p. 122).

Additionally, you can run all your online activities in an “incognito” window, where less of your personal information is stored. However, this is not a guaranteed solution, as most services will not work as they should (Pariser, 2011b, p. 122). Private browsing lets you browse in an incognito window without saving passwords, cookies and browsing history. However, it does not hide your identity or online activity. Websites and Internet service providers can collect information about your visit even if you are not logged in (“Common myths about private”, n.d.; Google Chrome help, 2021). When you log into sites such as Facebook, Amazon or Gmail in incognito mode, your actions are no longer anonymous or temporary. While cookies and tracking data are deleted when your private session ends, they can still be used while the session is active, linking your activities between various accounts and profiles. For example, if you are logged into Facebook, Facebook can see what you are doing on other sites and adjust its ads accordingly, even in incognito mode. The same is true for Google (Nield, 2020). Incognito mode therefore does not free you from the filter bubble completely, because the filter bubble does not depend only on your personal online activity; it also takes into account factors such as device and location (Ensor, 2017).

Other approaches to keep any tracking to a minimum are choosing a privacy-focused browser, choosing sites that give users more control and visibility over how their filters work and how they use personal information, using search engines that don't mine your data (such as DuckDuckGo, StartPage) or installing a reliable VPN (virtual private network) program (Nield, 2020; Pariser, 2011b, p. 122).

Source: DuckDuckGo Search

3. Using ad-blocking browser extensions:

These extensions remove most of the ads from the websites we visit. However, most sites rely on ad revenue to support their work, and some sites insist that users disable ad blockers before viewing a page. These are the drawbacks of ad-blocking browser extensions (Farnam Street, n.d.).

4. Using software to burst your filter bubble:

Using apps or browser extensions like Escape Your Bubble (Chrome extension), Read Across The Aisle (Chrome extension), PolitEcho (Chrome extension) can also help avoid filter bubbles.

5. Training:

One of the biggest problems with filter bubbles is that most people do not even know what they are. Individuals who are not aware that their results are personalized will not be able to take the necessary steps to investigate the truth. Therefore, training that raises awareness of filter bubbles and how we are manipulated by them, and that encourages people to consume information from various reliable sites and to look for multiple sides of an argument, will help reduce the negative effects of filter bubbles (Allred, 2018). Individuals should also learn to perform advanced searches using search assistants on Google or other resources (Cisek & Krakowska, 2018). In this regard, it is extremely important for individuals to acquire information literacy and news literacy skills.

On the other hand, it is also becoming important to develop algorithmic literacy at a basic level. Increasingly, citizens will have to make judgments about algorithmic systems that affect our public and national lives. Even if they're not fluent enough to read thousands of lines of code, it’s helpful to learn the basics (like how to manipulate variables, loops, and memory), how these systems work, and where they can make mistakes (Pariser, 2011b, p. 124).
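The basics mentioned above can be seen in a very small example. The sketch below is a made-up feed ranker, not the algorithm of any real platform: it uses a variable that stores click counts (the system's “memory”), a loop that simulates several days of use, and a sorting rule. The article list, the feed size of two and the assumption that the user always clicks the first item shown are all invented for illustration.

```python
from collections import Counter

# Invented articles as (title, topic) pairs.
ARTICLES = [
    ("Parliament debates budget", "politics"),
    ("Local derby ends in a draw", "sports"),
    ("New vaccine trial results", "health"),
    ("Transfer window rumours", "sports"),
    ("Election poll update", "politics"),
    ("Tips for better sleep", "health"),
]


def build_feed(click_history: Counter, size: int = 2) -> list:
    """Show the `size` articles whose topics the user clicked most often."""
    ranked = sorted(ARTICLES, key=lambda article: click_history[article[1]],
                    reverse=True)
    return ranked[:size]


def simulate(days: int, first_click: str):
    """Simulate a user who clicks the first item of the feed every day."""
    click_history = Counter({first_click: 1})  # memory: one initial click
    topics_seen = set()
    for _ in range(days):                      # loop: one pass per day
        feed = build_feed(click_history)
        topics_seen.update(topic for _title, topic in feed)
        clicked_topic = feed[0][1]
        click_history[clicked_topic] += 1      # the click is remembered
    return click_history, topics_seen


history, seen = simulate(days=3, first_click="sports")
print(seen)     # {'sports'}
print(history)  # Counter({'sports': 4})
```

A single initial click on a sports story is enough for this toy system to show only sports from then on, because the user can only click what is shown and every click reinforces the ranking. Reading even a sketch like this helps to see where such a system can go wrong: politics and health are never shown, so the system never learns whether the user cares about them.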

Changing our own behavior as individuals, in other words as users, is part of the process of tackling filter bubbles. However, this alone is not enough. Platforms that drive personalization also have some points to consider (Pariser, 2011b, p. 125).

What Can Platforms Do?

In the fight against filter bubbles, creators of social media platforms and other information sources should first of all try to create unbiased websites and be conscious of the civic responsibilities built into their algorithms (Allred, 2018).

Another important step is for platforms to try to make their filtering systems more transparent to the public. Thus, it may be possible to have a discussion about how they fulfilled their responsibilities in the first place. Even if complete transparency cannot be achieved, it is possible for these platforms to make it clearer how they approach sorting and filtering issues (Pariser, 2011b, p. 125). Transparency is not just about making the operation of a system public. It also means that users intuitively understand how the system works. This is a necessary precondition for people to control and use these tools, rather than for tools to control and use us (Pariser, 2011b, p. 126).

It is also extremely important for platforms to explain how they use data (such as which pieces of information are personalized to what extent and on what basis) and to be transparent about it (Pariser, 2011b, p. 126).

Given today's large and fast flow of information, it is obvious that some selection is necessary. The question is how to decide which information anyone should see. Although there is no clear answer to this, platforms may try to offer users different possibilities rather than choosing on their behalf (Stray, 2012).

In summary, the most important task for users in avoiding filter bubbles is to be aware of filter bubbles and to draw on information from different sources. The most important task of the platforms is to be transparent about how the algorithms they use work and to inform their users about what data they collect about them and for what purpose.

Exercises

Exercise 1

Exercise 2

Quiz

References

Allred, K. (2018, April 13). The causes and effects of “filter bubbles” and how to break free. Medium.

Bozdağ, E. & van den Hoven, J. (2015). Breaking the filter bubble: Democracy and design. Ethics and Information Technology, 17, 249-265.

Cisek, S. & Krakowska, M. (2018). The filter bubble: A perspective for information behaviour research. Paper presented at ISIC 2018 Conference.

Common myths about private browsing. (n.d.). Support Mozilla.

Dean, B. (2021, October 10). Google’s 200 ranking factors: The complete list (2021). Backlinko.

Ensor, S. (2017, August 18). How to escape Google's filter bubble. Search Engine Watch.

Farnam Street. (n.d.). How filter bubbles distort reality: Everything you need to know [Blog post].

Fletcher, R. (n.d.). The truth behind filter bubbles: Bursting some myths. Reuters Institute for the Study of Journalism.

Google Chrome help. (2021). Browse in private.

Nield, D. (2020, February 8). Incognito mode may not work the way you think it does. Wired.

Pariser, E. (2011a, March). Beware online “filter bubbles”. TED Talks.

Pariser, E. (2011b). The filter bubble: What the Internet is hiding from you. The Penguin Press.

Stray, J. (2012, July 11). Are we stuck in filter bubbles? Here are five potential paths out. NiemanLab.

Recommended Sources

Lanier, J. (n.d.). Agents of alienation.

Piore, A. (2018, August 22). Technologists are trying to fix the “filter bubble” problem that tech helped create. MIT Technology Review.

Sunstein, C. (2007). Republic.com 2.0. Princeton University Press.

Recommended Video

Pariser, E. (2019, July). What obligation do social media platforms have to the greater good [Video]. TED Talks.